Source RAND Corporation

Citizen scientists gathered in Berlin this past week for ECSA 2016, the first International Citizen Science Conference aimed at policy makers, science funders, scientists, practitioners, NGOs and interested citizens. It was a major event, during which an open journal entitled Citizen Science: Theory and Practice was also announced.

Curated Citizen Data

Citizen science is making solid progress as a valuable technique in actual research programs, and researchers are becoming increasingly interested in data sets gathered by citizens. As this trend develops, questions of data quality and curation obviously remain top of mind. I couldn’t fail to notice how http://crowdsignals.io/ is proposing a model whereby researchers can fund data collection efforts while ensuring that part of the crowdfunds makes it to the citizens themselves. A typical project of this kind could be EPFL’s SenseCityVity, which collected and curated data on how youngsters perceive their urban environment.

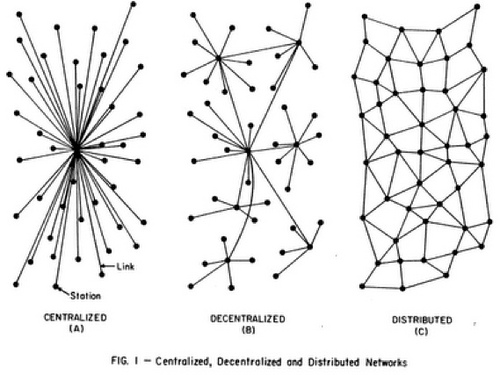

Citizen sensing is generally a local endeavour addressing questions of urban planning, biodiversity, security, or pollution that play out in specific ways across locations. When organizations propose today to collate and facilitate access to that data, they do so based on the established principle of today’s Internet: centralization.

Cloud infrastructures have made it easy to launch services which are designed to grow through the number of projects they list, photos they hold, or profiles they store. All that data remains under the control of one organization whose terms & conditions we usually accept without reading and which operates under data privacy laws that may differ fundamentally from our own. That goes for Twitter, SciStarter, or Kickstarter, to name a few. We even forget that, just to reach a web site, we go through a centralized service called DNS which resolves friendly names to IP addresses.

Extreme Distribution

To be fair, the domain name system and many of today’s fast-growing web sites are built in a distributed fashion, spreading their servers across hosting facilities. But each of them is still under the control of an organization other than its users.

The folks at https://unhosted.org/ have been thinking about such considerations for a while now. They have tackled specific use cases which allow your browser to interact with a web site while you retain control over the data by keeping it local. But not all use cases lend themselves to this model.

Imagine you wanted to manage a ledger which holds the transfers of a virtual currency from one user to another. Bitcoiners addressed this problem with cryptographically signed transactions recorded in a local ledger database which is then shared with others. As the ledger acts as a common source of truth, changes must be propagated and a consensus reached. To achieve this, the protocol forces participating computers to perform long calculations before they can claim changes to the ledger. The new set of transactions, along with the proof of work, is broadcast to the other nodes, which then check the transactions and their order. The difficulty of the calculation is adjusted regularly so that new changes arrive at a roughly constant pace, leaving nodes enough time to broadcast them. As this process is subject to network latencies, ordering inconsistencies occur, and the protocol’s rule mandates that the longest (most difficult) sequence of changes wins.
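To make the proof-of-work idea a bit more concrete, here is a toy sketch in Python. It is not Bitcoin's actual implementation: the function names (`block_hash`, `mine`, `is_valid`, `pick_chain`) are made up for illustration, transactions are plain strings rather than signed records, and the "difficulty" is simply the number of leading zeros required in a SHA-256 hex digest.

```python
import hashlib
import json


def block_hash(prev_hash: str, transactions: list, nonce: int) -> str:
    """Hash a candidate block: previous hash, transactions and a nonce."""
    payload = json.dumps(
        {"prev": prev_hash, "txs": transactions, "nonce": nonce},
        sort_keys=True,
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def mine(prev_hash: str, transactions: list, difficulty: int):
    """Try nonces until the hash starts with `difficulty` zeros (the 'long calculation')."""
    prefix = "0" * difficulty
    nonce = 0
    while True:
        digest = block_hash(prev_hash, transactions, nonce)
        if digest.startswith(prefix):
            return nonce, digest
        nonce += 1


def is_valid(prev_hash: str, transactions: list, nonce: int, difficulty: int) -> bool:
    """Checking a proof takes a single hash, however long it took to find."""
    return block_hash(prev_hash, transactions, nonce).startswith("0" * difficulty)


def total_work(chain: list) -> int:
    """Rough expected number of attempts behind a chain (16**d per block in this toy model)."""
    return sum(16 ** block["difficulty"] for block in chain)


def pick_chain(chain_a: list, chain_b: list) -> list:
    """On conflict, keep the chain that represents the most accumulated work."""
    return chain_a if total_work(chain_a) >= total_work(chain_b) else chain_b


if __name__ == "__main__":
    genesis = "0" * 64
    nonce, digest = mine(genesis, ["alice->bob:5"], difficulty=3)
    print(nonce, digest)
    print(is_valid(genesis, ["alice->bob:5"], nonce, difficulty=3))
```

The asymmetry is the point: finding the nonce takes thousands of attempts even at this toy difficulty, while any other node can check the claim with a single hash.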

Traditional solutions bury the database deep inside a hosting facility and mediate changes to it via the application (aka middleware) that sits between the browser and that database. Bitcoiners manage to run a currency with any Tom, Dick and Harry holding a full copy of the ledger and reaching consensus on the order of transactions.

Project Douglas

A further digression from curated citizen sensing datasets. I came across the Project Douglas team back in 2014 while learning about Ethereum through, for example, Primavera De Filippi’s talk at the Berkman Center, “Freenet or Skynet?”. Ethereum pushed the Bitcoin architecture one notch further by introducing the ability to run code inside that distributed database.

Code as in programmable logic with if statements, loops, variables and all that good stuff. These scripts are cryptocontracts of sorts and the brainchild of Nick Szabo, who came up with smart contracts back in 1997. Vitalik Buterin and Gavin Wood effectively implemented that concept, along with alternative ways of reaching consensus, in Ethereum, which is not only a distributed database but effectively a very large, specialized computing entity.

Enter Project Douglas. It’s the end of 2014 and the final stretch before the inception of Ethereum. Many hackathons, meetups and hangouts are keeping teams busy. Among those, the Project Douglas gang, which includes actual lawyers, was quick to recognize the potential of smart contracts and rapidly proposed C3D, or Contract Controlled Content Dissemination. That project would eventually lead to Eris Industries, now a key player in the cryptocontract space with tools to develop and run systems based on such distributed databases.

Eris had assembled a number of components for its C3D idea, including Ethereum but also IPFS for the storage of actual content. One of their later demos would include a distributed YouTube clone using IPFS to store videos. At the moment, the most striking example of smart contracts in action is the Slock.it initiative, which promotes solutions for the sharing economy through connected locks that can activate a cryptocontract for the renting of a property such as a small apartment, a basement, or a bicycle.

As the locks, the gateways and a bunch of other deliverables need funding, a DAO or Decentralized Autonomous Organization was put in place to crowdfund capital and allocate budgets to the various projects. That’s Ethereum folks eating their own dog food.

IPFS

Project Douglas had spotted Juan Benet’s IPFS initiative, which felt like swallowing another small red pill hidden inside the first cryptosystems red pill. As a millennial educated in distributed systems at Stanford University, Juan looked at the Web he grew up with and proposed a new way to address content which departs from the centralized approach we know. IPFS, or “Interplanetary File System”, aims at making the “permanent web” possible by leveraging proven solutions including distributed hash tables, Self-certifying File Systems, BitTorrent and Git. Instead of relying on DNS and uniform resource identifiers (of which URLs are familiar to most), IPFS assigns a unique identifier to the content it stores by means of hashing. Every piece of content is associated with its own hash or identifier. Each IPFS node is also associated with a pair of keys, which makes it possible to identify the origin of content by checking it against a public key.
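Content addressing is easier to grasp with a small example. The sketch below is a deliberately simplified model in Python and not the IPFS API: `ContentStore` is a hypothetical in-memory class, and a plain SHA-256 hex digest stands in for the richer identifiers and chunking that IPFS actually uses.

```python
import hashlib


class ContentStore:
    """Toy content-addressed store: the identifier of a blob is the hash of its bytes."""

    def __init__(self):
        self._blobs = {}

    def put(self, data: bytes) -> str:
        # The address is derived from the content, not from a server name or path.
        identifier = hashlib.sha256(data).hexdigest()
        self._blobs[identifier] = data
        return identifier

    def get(self, identifier: str) -> bytes:
        data = self._blobs[identifier]  # raises KeyError if the content is unknown
        # Anyone can re-hash what they received and detect tampering.
        if hashlib.sha256(data).hexdigest() != identifier:
            raise ValueError("content does not match its identifier")
        return data


if __name__ == "__main__":
    store = ContentStore()
    cid = store.put(b"noise level at the corner: 62 dB")
    print(cid)
    print(store.get(cid))
```

Because the identifier depends only on the bytes, the same content gets the same address on every node, and it does not matter which node ends up serving it.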

Project Douglas knew Ethereum is not meant to hold large amounts of data and had identified IPFS as the distributed technology to host the larger data files, which C3D smart contracts reference via their unique identifiers.
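The division of labour described here can be sketched in a few lines of Python. This is only an illustration of the pattern, not C3D itself: the `ledger` and `blob_store` dictionaries and the `publish` and `fetch` helpers are hypothetical stand-ins for contract storage and for an IPFS-like network.

```python
import hashlib

ledger = {}      # stands in for contract storage: small records, replicated everywhere
blob_store = {}  # stands in for IPFS-like storage: large files, keyed by their hash


def publish(name: str, data: bytes) -> str:
    """Store the payload off-ledger and record only its identifier on the ledger."""
    identifier = hashlib.sha256(data).hexdigest()
    blob_store[identifier] = data
    ledger[name] = identifier
    return identifier


def fetch(name: str) -> bytes:
    """Look up the identifier on the ledger, then verify the payload against it."""
    identifier = ledger[name]
    data = blob_store[identifier]
    if hashlib.sha256(data).hexdigest() != identifier:
        raise ValueError("content does not match the identifier on the ledger")
    return data


if __name__ == "__main__":
    publish("air-quality-2016", b"station,pm10\nA,21\nB,34\n")
    print(ledger["air-quality-2016"])
    print(fetch("air-quality-2016"))
```

The ledger entry stays the same size (64 hex characters in this sketch) however large the dataset grows, which is why the heavy content can live elsewhere.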

Unhosted Citizen Data

We are living in exciting times. We tend to think of the Web in terms of Software-as-a-Service supported by cloud providers and DNS. I recall using Ning’s PHP sandbox for a content management system, which was cool until they pivoted and pulled the plug on their sandbox and APIs. Revenue models remain core to platforms on the Internet, and we tend to forget or ignore the trade-offs when we sign up for their use.

The innovations brought by the unhosted movement, bitcoiners and IPFS present, I believe, a way to retain control over data I could collect with fellow citizens, either directly or via some sensor. The datasets can become content-addressable on new kinds of networks: networks which provide redundancy and implement automated bargaining mechanisms to negotiate goals and requisite data. This data may be interesting for research but also for urban planning, as shown by use cases presented by Swisscom at Smart Cities events in the area.

In a distant future, citizen sensing organized in hierarchical structures may act as a feedback mechanism for more agile forms of government. Who knows?

In the end, smart contracts and distributed storage are just portions of the overall vision. As we’ll see with the DAO mentioned above, distributed squads working towards a universal sharing network (or large-scale sensor journalism or urban planning or ecosystem conservation) will still require governance principles. I’m thinking of elements identified by Elinor Ostrom for common-pool resource (CPR) institutions or by the Viable System Model for cooperatives. But that would be a story for another time.

For now, stay alert, for the envelope is being pushed, redefining work and production as we know them.

This material is licensed under CC BY 4.0
